Projects

Social Crowd Navigation

Many crowd navigation methods are often short-sighted and prone to the freezing robot problem. To tackle these problems, we propose a novel reinforcement learning planner that reasons about spatial and temporal relationships between the robot and the crowd. In addition, we incorporate human trajectory prediction into the planner to increase safety and social-awareness of the robot.

Relevant papers:

- HEIGHT: Heterogeneous Interaction Graph Transformer for Robot Navigation in Crowded and Constrained Environments, under review.

- Intention Aware Robot Crowd Navigation with Attention-Based Interaction Graph, ICRA 2023.

- Decentralized Structural-RNN for Robot Crowd Navigation with Deep Reinforcement Learning, ICRA 2021.

- Occlusion-Aware Crowd Navigation Using People as Sensors, ICRA 2023.

Autonomous Driving

Understanding the interactions and behaviors of surrounding drivers is essential for autonomous vehicles (AV). To this end, we propose novel networks to detect the abnormal drivers and predict driving styles in an unsupervised fashion, which improves navigation of AV. Besides this, we also build realistic multi-agent traffic simulations.

Relevant papers:

- Learning to Navigate Intersections with Unsupervised Driver Trait Inference, ICRA 2022.

- Structural Attention-Based Recurrent Variational Autoencoder for Highway Vehicle Anomaly Detection, AAMAS 2023.

- Combining Model-Based Controllers and Generative Adversarial Imitation Learning for Traffic Simulation, ITSC 2022.

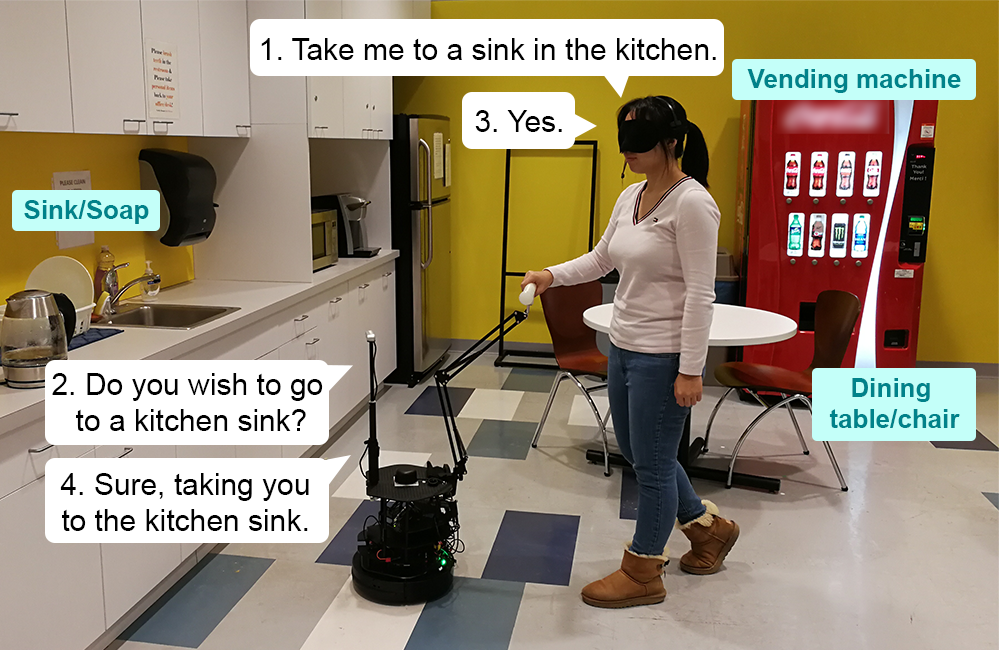

Instruction Following Robot & Visual-Language Grounding

To enable robots to serve humans and those with disabilities in everyday environments, the robots must understand spoken language and associate commands to entities in the environment. We pursue two directions to achieve speech command-following robots:

- Learning a visual-audio representation for RL skill learning without hand-engineered reward;

- Building a system with vision-language models to guide persons with visual impairments from place to place and enhance their knowledge of the environment.

User studies with real human subjects show that our systems are intuitive and easy to use.

Relevant papers:

- A Data-Efficient Visual-Audio Representation with Intuitive Fine-tuning for Voice-Controlled Robots, CoRL 2023.

- Learning Visual-Audio Representations for Voice-Controlled Robots, ICRA 2023.

- Robot Sound Interpretation: Combining Sight and Sound in Learning-Based Control, IROS 2020.

- DRAGON: A Dialogue-Based Robot for Assistive Navigation with Visual Language Grounding, RA-L 2024.

Manipulation

Real-world long-horizon manipulation problems have been a longstanding challenge in robotics. We model object dynamics with graph networks and utilize motion primitives to achieve effective manipulation. We demonstrate our methods in real world tasks such as stowing and scooping.

Relevant papers:

- Predicting Object Interactions with Behavior Primitives: An Application in Stowing Tasks, CoRL 2023.

- Multi-Step Planning for Granular Object Scooping via Graph Networks and Skill Search, in preparation 2024.

Machine Learning

While reinforcement learning (RL) is an appealing way to learn robot policies, it is prone to performance degradation under adversarial perturbations and sim2real gaps. We pursue two directions to address this problem:

- We improve the robustness of RL algorithms with adversarial training;

- We evaluate RL policies in novel domains with minimal rollout data using importance sampling and optimization.

Relevant papers:

- Robust Deep Reinforcement Learning with Adversarial Attacks, AAMAS Extended Abstract 2018.

- Off Environment Evaluation Using Convex Risk Minimization, ICRA 2022.